I’m Christian and this is my bi-weekly (fortnightly?) newsletter with interesting content and links orbiting the world of graphs.

If you’re new here, hello and welcome! Pull up a chair. Do you want some coffee? How’s your day going? This week I’m covering an interestingly graphy take on the Turing Test, which you probably know all about. I’ve also collated a number of interesting projects plucked from the internet, so let’s get to it.

Graphs

As some of you source/target old-timers (sourcerers? targeteers?) may recall, I originally expected the newsletter to dig into releases and updates to graph technologies. Six months in, I’m finding myself less interested in that and more focused on the intersection between graphs and other topics. I think the result is a newsletter that’s a little more compelling than just a record of point releases from graph database vendors.

For the graph database vendors reading, ignore the above. I love your point releases and read every single changelog, I promise.

I also don’t want to be someone pretending to know absolutely everything about graphs. The edict of “write what you know” is more apparent to me than ever and there’s a lot I don’t know about graphs. source/target is a great outlet for me to learn new things. My intent is to bring you, dear reader, along for the ride.

The domain of graph drawing is a particularly deep one, and I’ve barely scratched its surface here. Thanks to Patrick Mackey for drawing my attention to the recent paper entitled “The Turing Test for Graph Drawing Algorithms” by Helen Purchase et al.

You’ve probably heard of the Turing Test, especially if you’ve seen Blade Runner (I haven’t). If not, let’s look to Wikipedia:

a test of a machine’s ability to exhibit intelligent behaviour equivalent to, or indistinguishable from, that of a human.

Reasonable enough. Out of interest, here’s how Simple Wikipedia describes it:

a test to see if a computer can trick a person into believing that the computer is a person too.

It’s a definition of broad strokes but I like this version a lot. The spin that the computer is misleading humans is delightful.

The Turing Test is one of those evergreen scientific concepts referenced and applied across a gamut of different topics. I think of it as Schrödinger’s Cat but for Computer Science rather than Quantum Mechanics.

“When I hear about Schrödinger’s cat,” Stephen Hawking once said, “I reach for my gun.” By the way, when did naming something after or invoking Turing become such a lazy attempt at implying intelligence? Was it Turing Pharmaceuticals?

2014’s Alan Turing biopic “The Imitation Game” starring Benedict Cumberbatch takes its name from the game described in the original paper that introduced Turing’s test: “Computing Machinery and Intelligence.” There is some disagreement on whether the paper describes a single form or multiple forms of the test, but in a nutshell the idea is the same: can we be tricked by machines into thinking they are human?

Alright, alright, this was just an excuse to shoehorn that awful graph pun into the title.

In “The Most Human Human” Brian Christian ponders the boundaries of the Turing Test. Christian describes his research and preparation to enter the competition known as the Loebner Prize, a sort of championship to build the best-performing chatbot to pass a restricted form of the Turing Test. The title of the book comes from the twisted prize he intended to compete for: real people take part alongside the chatbots, and the person who comes across as most convincingly human is awarded the title of “Most Human Human.”

By focusing on the aim of tricking judges into believing that a chatbot is human, the Loebner Prize is incidentally closer to the Simple Wikipedia definition of the Turing Test, especially considering a number of restrictions applied to the format.

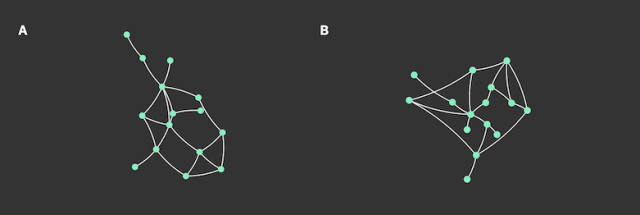

Purchase et al. have applied the idea of the Turing Test to the drawing (or arrangement) of nodes and relationships in a graph. Can a human be tricked into believing that a graph layout was manually arranged?

In our experience as graph drawing researchers, it is often preferable to draw a small graph ourselves, how we wish to depict it, than be beholden to the layout criteria of automatic algorithms. The question therefore arises: are automatic graph layout algorithms any use for small graphs? Indeed, for small graphs, is it even possible to tell the difference?

The authors went to admirable lengths to make the test as fair as possible. They:

- Collated a “balanced split” of graph data

- Drew from each of the major families of graph drawing (force-directed, stress-based, circular, orthogonal) to automatically draw each graph

- Manually drew 72 graph drawings for comparison

- Built a custom online experimental system to run the experiments, with consent, randomization, breaks and timing of the decision process

- Aggregated 4364 independent decisions from 46 participants.

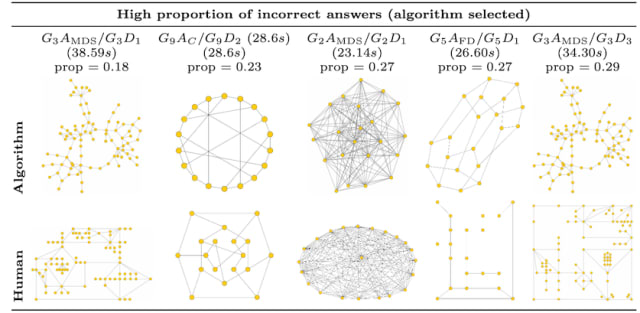

The conclusion reached by Purchase et al. was as follows:

In general, over all graphs and algorithms, participants can correctly distinguish hand-drawn layouts from algorithmically created ones: graph drawing algorithms (in general) effectively fail the Turing Test.

There are a few caveats to this conclusion:

However, we did not find evidence that force-directed and (marginally) [“stress-based”] algorithms could be reliably distinguished from hand-drawn layouts – they therefore effectively ‘pass’ the Turing Test. We speculate that this is the case because of the prevalence of these algorithms in the popular media (e.g., for depicting social networks)
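If force-directed layout is new to you, here’s a minimal sketch of the general idea in plain Python: every pair of nodes pushes apart, connected nodes pull together, and the whole thing gradually cools until it settles. This is my own simplified take, loosely following the Fruchterman-Reingold scheme, and not the algorithm or settings used in the paper. The function name, the constants and the iteration count are all assumptions for illustration.

```python
import math
import random


def force_directed_layout(nodes, edges, iterations=200, seed=42):
    """Return {node: (x, y)} using a simplified force-directed scheme.

    Illustrative sketch only; all constants here are arbitrary assumptions.
    """
    if not nodes:
        return {}

    rng = random.Random(seed)
    # Start every node at a random point in the unit square.
    pos = {n: [rng.random(), rng.random()] for n in nodes}
    k = math.sqrt(1.0 / len(nodes))  # rough "ideal" distance between nodes
    temperature = 0.1                # caps how far a node may move per step

    for _ in range(iterations):
        disp = {n: [0.0, 0.0] for n in nodes}

        # Repulsion: every pair of nodes pushes each other apart.
        for i, u in enumerate(nodes):
            for v in nodes[i + 1:]:
                dx = pos[u][0] - pos[v][0]
                dy = pos[u][1] - pos[v][1]
                dist = max(math.hypot(dx, dy), 1e-9)
                force = k * k / dist
                disp[u][0] += dx / dist * force
                disp[u][1] += dy / dist * force
                disp[v][0] -= dx / dist * force
                disp[v][1] -= dy / dist * force

        # Attraction: nodes joined by an edge pull each other together.
        for u, v in edges:
            dx = pos[u][0] - pos[v][0]
            dy = pos[u][1] - pos[v][1]
            dist = max(math.hypot(dx, dy), 1e-9)
            force = dist * dist / k
            disp[u][0] -= dx / dist * force
            disp[u][1] -= dy / dist * force
            disp[v][0] += dx / dist * force
            disp[v][1] += dy / dist * force

        # Move each node along its net force, limited by the temperature.
        for n in nodes:
            length = max(math.hypot(disp[n][0], disp[n][1]), 1e-9)
            step = min(length, temperature)
            pos[n][0] += disp[n][0] / length * step
            pos[n][1] += disp[n][1] / length * step

        temperature *= 0.98  # cool down so the layout settles

    return {n: (p[0], p[1]) for n, p in pos.items()}


# Hypothetical four-node path graph, just to show the call.
layout = force_directed_layout(
    ["a", "b", "c", "d"], [("a", "b"), ("b", "c"), ("c", "d")]
)
```

Fixing the random seed keeps the result repeatable, which is handy if you want to compare the output against your own hand-drawn attempt.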

Taking the opportunity to canvass their audience, the authors also asked their participants a supplementary question: which of the two graphs was better? Deliberately not defining “better”, they looked to record the subjects’ instincts when it came to subjectively preferring graphs.

The participants were found to, on average, prefer the human drawing. Of particular interest were the results for orthogonal drawings, which were both considered “worse” than hand-drawn graphs and did not pass the graph drawing Turing Test.

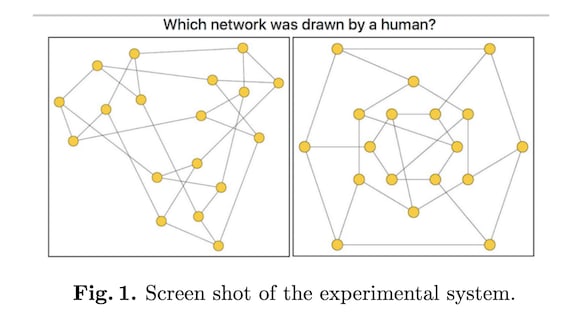

How do you think you’d fare in this test? Which of these two graphs is drawn by a human and which by an algorithm?

I’ve put together a short, completely unscientific experiment. Click here to make your guess and find out the answers.

Links

Built from scratch with React + SVG manipulation, Graphisual (video) from Lakshya Thakur is a pretty good attempt at a graph prototyping web app

Friend of the newsletter (Sourcerer) Dave B showcased visualizing a Neptune Graph Database using VisJS & SageMaker in a recent stream

Karate Club is a new unsupervised machine learning extension library for Python & NetworkX with an inspired name

Human Disease Network from Anjushree Shankar, featured as Tableau’s Viz of the Day last Monday

Nodes

The speakers and talks at the (online, obv.) GraphQL Summit were of a consistently high quality. In particular I loved Ashi Krishnan’s coverage of federation: in short, the blending of distinct GraphQL schemas into a unified index. The talk was equally interesting and mesmerizing, as they included animated visuals built using 3d-force-graph to bring the content to life. I felt the visuals really gave the technical content time to breathe.

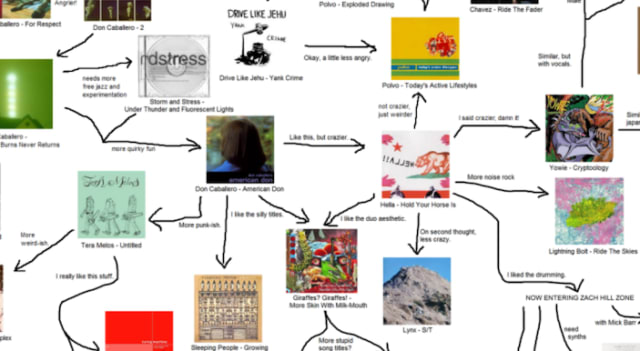

I stumbled upon this “use this to get your friends into Math Rock” graph on Reddit this week. I’m not sure of the ultimate source but I love the mix of structured links with irreverent commentary.

I spend a lot of time procrastinating. A lot of that procrastination time is spent wondering whether the software I use is the absolute best for the task in hand. In the olden days when “email” didn’t largely just mean “Gmail”, I used to spend a lot of this time trialing and testing various email clients to ensure my workflow for triaging my inbox and sending emails was as ~efficient~ as possible. Yes, I’m aware this was a problem.

A particular favorite of mine was (and probably remains) Mozilla Thunderbird; appealing due to the rich ecosystem of plugins, each one promising a “level-up” of capability unmatched by your Outlook Expresses of the day.

Depressing news this week of 250 layoffs at Mozilla. If you’re hiring check out the directory of Mozillians looking for a new role.

Multi-person threads of cc-ing, fwd-ing, bcc-ing, re-ing & reply-all-ing are almost a literal nightmare for me—especially when Gmail sputters to a halt when attempting to show me chains of 30+ messages.

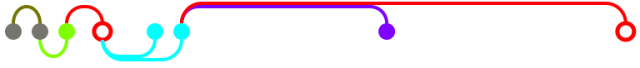

Threadvis brought a little bit of calm to these frayed threads by embedding an interactive graph of your current email thread, nestled between your inbox and message pane.
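To give a feel for why an email thread is a graph at all, here’s a minimal Python sketch that turns a list of messages into a reply tree by following each message’s `In-Reply-To` header back to its parent’s `Message-ID`. This is not how Threadvis itself works under the hood (I haven’t read its source); the function name, the dict keys and the sample messages are all hypothetical.

```python
from collections import defaultdict


def build_thread_graph(messages):
    """Return (roots, children) for a list of message dicts.

    Each message is assumed to look like
    {"id": <Message-ID>, "in_reply_to": <parent Message-ID or None>}.
    """
    known = {m["id"] for m in messages}
    children = defaultdict(list)  # parent id -> ids of direct replies
    roots = []                    # messages whose parent is missing or unknown

    for m in messages:
        parent = m.get("in_reply_to")
        if parent in known:
            children[parent].append(m["id"])
        else:
            roots.append(m["id"])

    return roots, dict(children)


# Hypothetical four-message thread: two replies to the first message,
# and one reply to the second of those replies.
sample = [
    {"id": "<1@example.com>", "in_reply_to": None},
    {"id": "<2@example.com>", "in_reply_to": "<1@example.com>"},
    {"id": "<3@example.com>", "in_reply_to": "<1@example.com>"},
    {"id": "<4@example.com>", "in_reply_to": "<3@example.com>"},
]
roots, children = build_thread_graph(sample)
```

Each message becomes a node and each reply becomes an edge, which is all a drawing tool needs to lay the thread out.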

All that’s to point you to this interesting post from Ryan Bell on the Wolfram Community forum effectively re-creating an alternative Threadvis in Mathematica.

Thank you for making it all the way to the very end. While you’re here why not forward this to a friend? Full newsletter archives are available for delayed consumption.

See you in a few weeks! Anyone else looking at the calendar and shaking their head in disbelief that it’s basically September?