Her dog was by her side: a blur of gray fur with a red bandana draped loosely at the neck. Should I ask her? She’d probably think I’m hitting on her. Or at least think it’s some sort of scam. I guess that’s what I’d think. After rattling around in my head, the question had finally taken shape:

“Would you mind taking a picture of this goose and emailing it to me?”

The goose was nestled at the edge of the cliff, mostly obscured in the long grass. It was gazing out over the ocean with an expression of pure… just kidding, I had no idea what it was thinking. Geese are notoriously aggressive, ready to hiss and stomp and chase at a moment’s notice. Canada Geese have been known to risk death to protect their offspring.

But this goose was… pensive? It looked over the strait and into the misty distance where it merged with the mottled, inky-gray sky. Far below, I could just about spot two ducks on the beach.

The woman had reached me. I opened my mouth and before I could say anything she smiled. I thought of how I looked. Some random guy in running clothes, slightly damp from the rain, staring at a goose, staring at the ocean. My agape mouth morphed into a smile and before I knew it she was past me. I had missed my chance.

When I run I don’t carry my phone. My internet-addled brain could do with some time away from screens, from notifications, from little red dots demanding my attention. I get a bit of flak for this, especially on runs where it’s getting dark and I’m a little lost and I’m back much later than I said I would be.

This does mean I miss out on taking photos of weird little scenes like the goose. Here’s my artist’s impression of the scene. I hope it brings some of that magic moment to life.

Humans are prone to spotting patterns in the noise of their day-to-day life; it’s called frequency bias. I thought back to all the goose encounters I’d had this year – I was suddenly much more aware of them than, perhaps, ever in my life.

I had seen them blocking a patient line of traffic with an adorable gaggle of goslings. Plonked defiantly on the top of a bus as a driver struggled to chase them away. I had been surprised by one hissing at me as I ran too close on a path.

The Goose on the Cliff was an archetypal Canada Goose. You’re probably already picturing its black and white head and muddy gray body planted into the ground with stocky, scaly feet. The Provincial Museum handbook “The Birds of British Columbia: №6 Waterfowl,” first printed in 1958, reliably informs me that there are at least four subspecies of Canada Goose (Branta canadensis):

- honker

- western

- lesser

- cackler

but I’d be damned if I could tell you the difference.

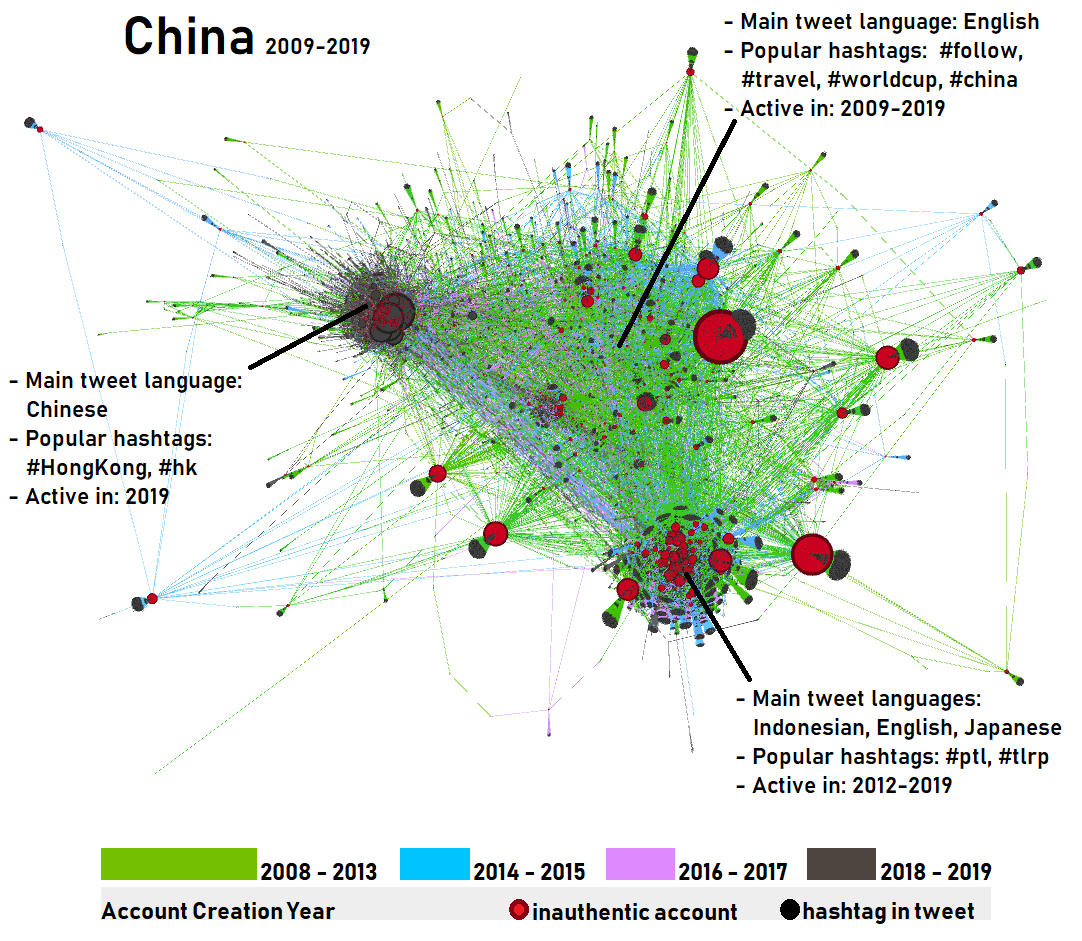

A circular tree-like structure with various species of geese around the edge of the network - labelled "a". It's clear they're all connected in some way. There's also a histogram plot labelled "b". The caption says: a Neighbour-joining Network of the True Geese using the ordinary least squares method (with default settings) in SplitsTree version 4.1.4.2 [15], based on genetic distances. b The comparison of degree distributions indicates that the Anser-network is more complex compared to the Branta-network as it contains relatively more nodes with four and five edges. Drawings used with permission of Handbook of Birds of the World.](https://sourcetarget.email/optim/assets/st44/goose-family-tree.png)

Known, in part, for the impact of their defecation on otherwise beautiful, swimmable lakes, the Canada Goose doesn’t always migrate. Environmental changes such as losing a nest can lead them to fly thousands of miles with surprising alacrity: some have been known to fly 1,500 miles in just 24 hours. A protected species, they can be found across all the provinces and territories in Canada.

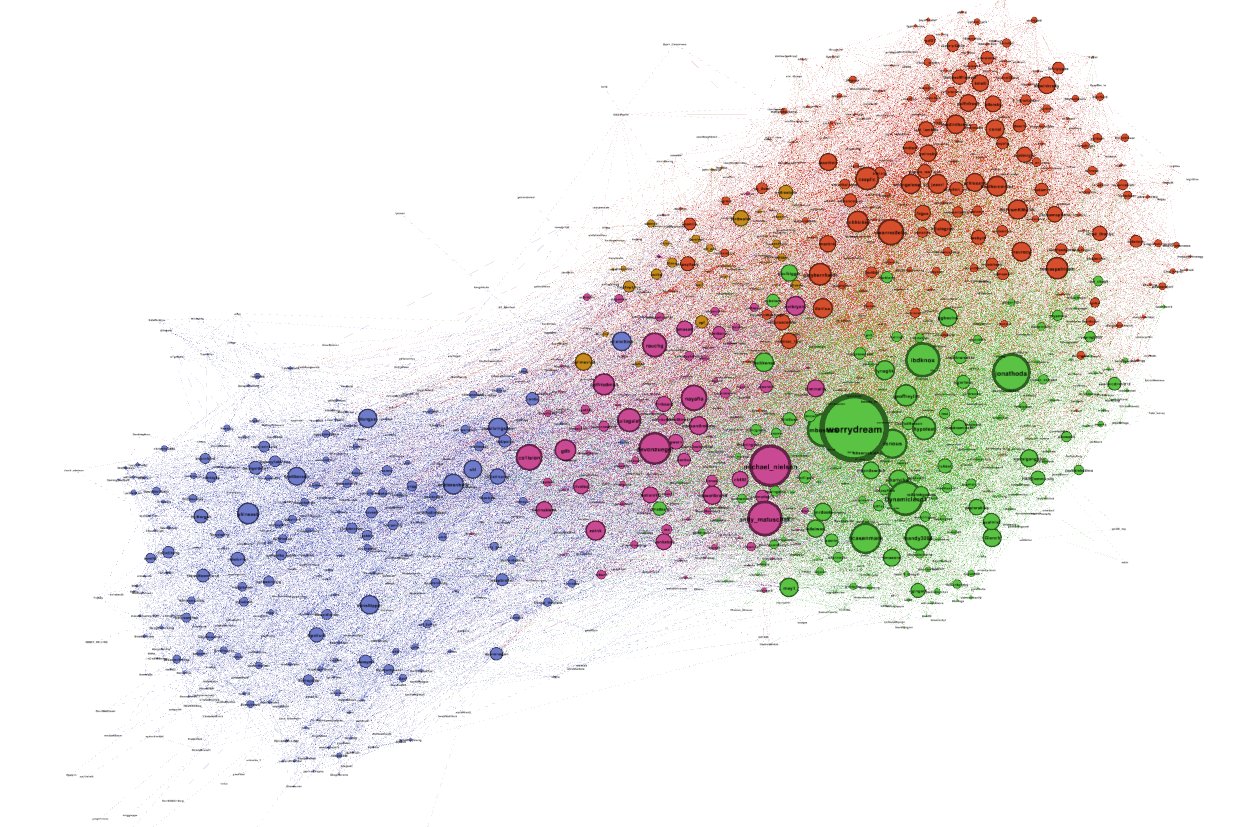

A black and white map of the Eastern US and Canada with arrows showing where a number of geese were released and where they ended up. Most travel for thousands of kilometres from states such as New York and Philadelpha up to Northern Quebec. The Legend shows that the different geese were "banded" in 2001 and 2003.](https://sourcetarget.email/optim/assets/st44/goose-migration.png)

The grass around The Goose on the Cliff was flattened. Perhaps it was nesting and set to be joined by a number of offspring in the coming days.

Canada Goose young are “precocial”, which means they are born in an advanced state, ready to forage and feed themselves on tender shoots and insects. I would soon see many goslings in the nearby park, enlarged ducklings and tufty teens.

Within a few months they will be ready to migrate with their parents.

My goose awakening of 2022 was kicked off by a surprising first appearance.

As a recent transplant to the West Coast, I still find the menagerie of ocean life novel. Seals carrying salmon home to their litter, squid picked up by their beak by a beach bum in a baseball cap, seagulls picking up crabs and dropping them from great heights to smash their shells and get at the tasty treat inside, starfish in shallow tide pools…

One species I have a slightly repulsed, distant respect for is the prehistoric barnacle I see clinging to the rocks at low tide.

Barnacles start their life as larvae floating in the ocean, seeking a spot to land and make their home. Once ready they secrete a cement to affix themselves to a rock, boat or animal. The creature then forms a calcite shell made up of a collection of interlocking plates. These are adjustable, acting as a sort of garage door that opens or closes depending on the tide or if a predator is near.

After a hike on beautiful Sandcut Beach on the south-west side of Vancouver Island, I was inspired to flick through another British Columbia Provincial Museum handbook, this one on barnacles (№7). Therein I spotted an illustration of the exact stalked barnacle I had found on the beach: goose or gooseneck barnacles. Eaten by First Nations communities for centuries and considered a delicacy in Europe, they make a prehistoric, otherworldly impression with their flesh and scales.

![A number of goose barnacles in a cluster pointing out from the left to the right. They have a hard, gray pincer-like shell with a dark center.](https://sourcetarget.email/optim/assets/st44/goose-barnacles.jpg)

I assumed the naming was simply born of a visual similarity – the barnacles are indeed reminiscent of the gray and white neck of the goose. But in 12th-century Europe the ties were deeper: the nesting and migratory patterns of the Brant Goose led observers to the dubious logical conclusion that the two had to be one and the same creature.

Page 8 of the barnacle handbook contained a similar illustration from the same era, depicting the curious growth of geese as fruit from the branches of some sort of fabled barnacle tree. This created confusion about whether geese should be classified as plant or animal: something of intense religious importance to dietary observances on holy days. If a goose was basically a tree then surely it could be eaten on days that meat was forbidden?

Charles Darwin of “On the Origin of Species” fame, more recently of source/target fame, originally dedicated his time to researching the varieties of our humble barnacle. His work in 1859 ultimately severed the barnacle ←→ goose connection. Thankfully, we still have lots of medieval art to show for it.

There’s a name for when barnacles and other organisms attach themselves to human vessels or structures: biofouling. Unsurprisingly for a human word, there’s an implicit conflict in the name. These creatures are causing costly drag on boats and ruining our nice paintwork. The term leaves no room for the reality that we’re just passing through a world that barnacles have been cementing themselves to for over three hundred million years.

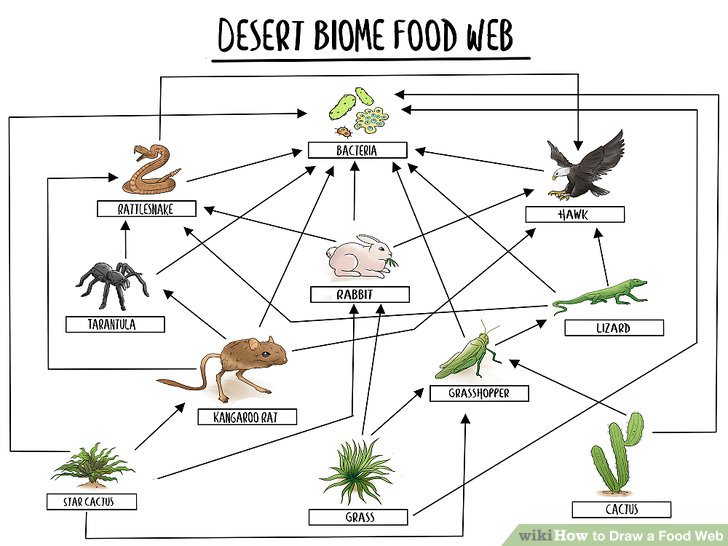

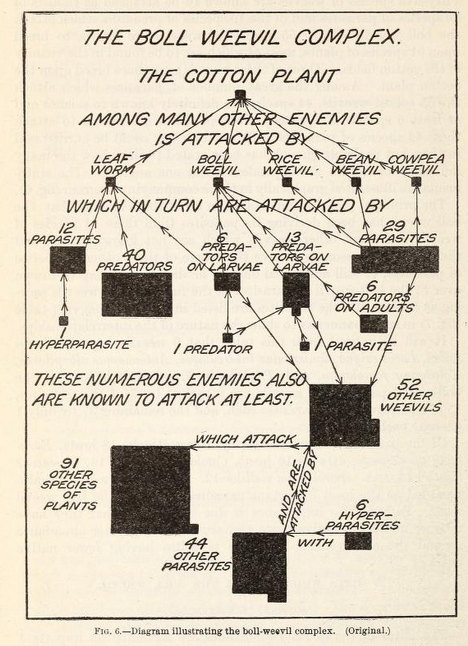

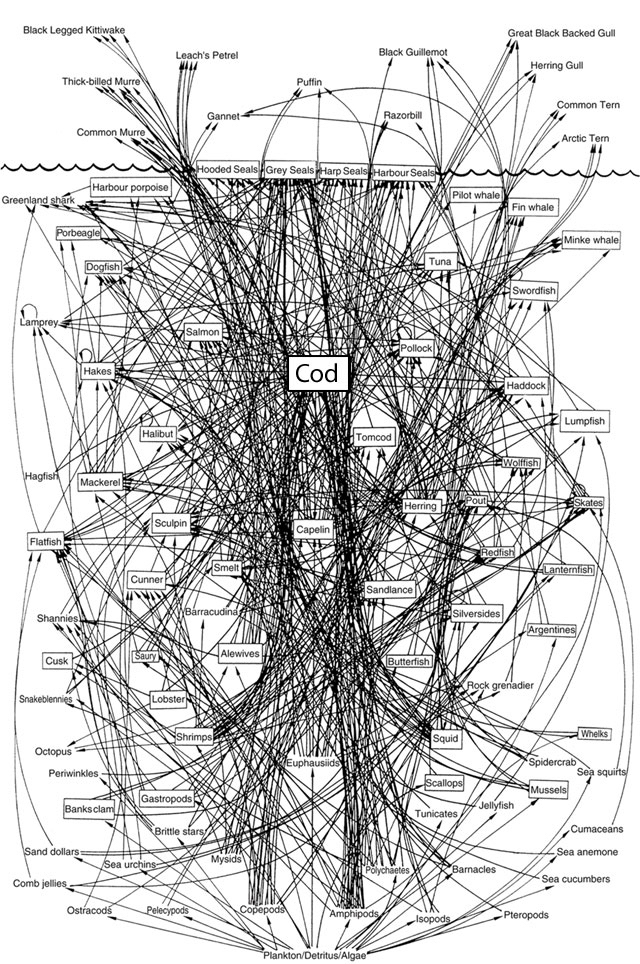

Ocean scientist Juli Berwald’s article The Web of Life turns a critical eye to what is popularly known as the Tree of Life, derived from Darwin’s work.

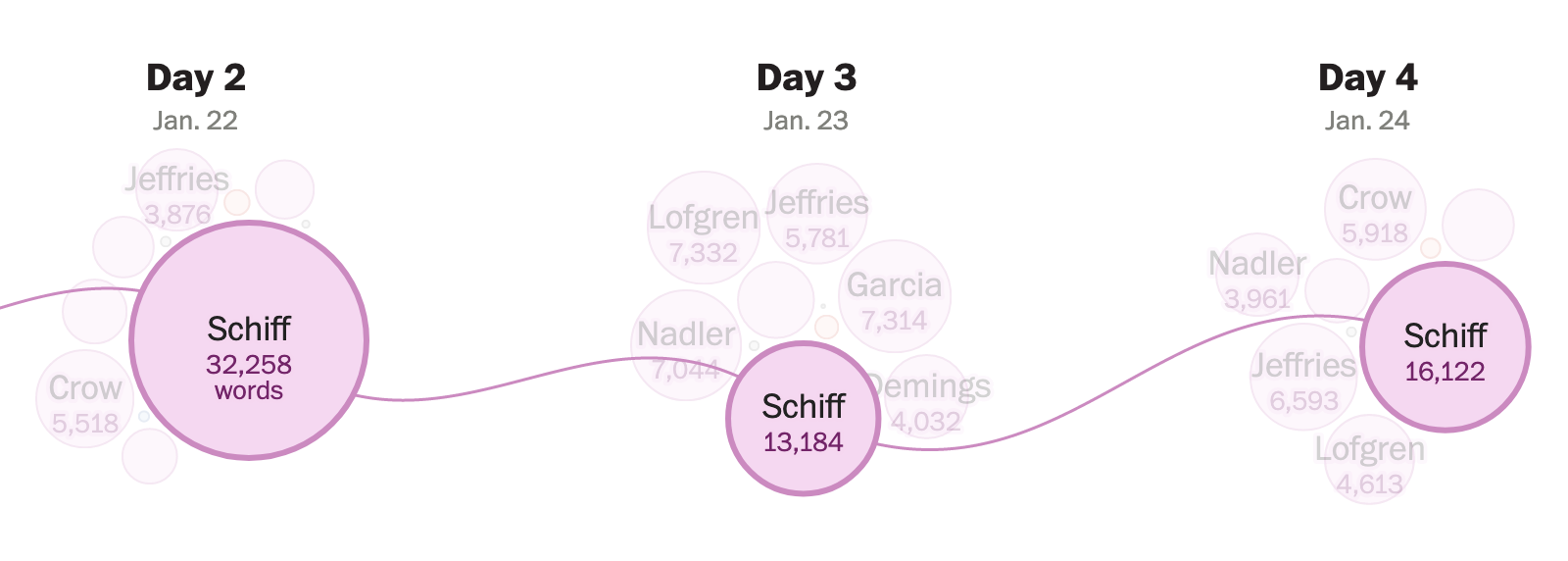

A complex family tree of various species of fish. The fish are little orange things but many of them have dark gray patterns in different forms. There are many complex scientific names for them, for example "X. variatus" and "Pseudoxiphophorus jonesii." There's a deep nest of origins on the left hand side in green which points to various slightly-different looking fish shown to be at various locations in the Gulf of Mexico. The green tree of life has a strange structure to it, like there's lots of cross-pollination between the branches.](https://sourcetarget.email/optim/assets/st44/tree-of-life.png)

> Staring at the figure, something clicked for me: every individual and every population contains many genes, each with its own evolutionary history and gene tree. All those gene trees will always result in disagreements, in fuzziness, in reticulation. Veron had been looking at the forest every time he looked at an individual.

> … today’s corals are a product of Darwin’s classical natural selection when [ocean] currents are slack, and of hybridisation when they are strong. Species separate and merge, and more so over long expanses of time and space.

> [What we think of as] “species” are not units, they are bits of a continua. What comprises a “bit” is arbitrary – a taxonomist’s opinion. Arbitrary, and in truth forever variable in space and time.

Some barnacles are destined for bigger things. Whale barnacles are an elusive form of acorn barnacle that manage to affix themselves to a convenient part of their namesake mammal. By alighting on the chin or forehead of whales they’re in prime position to collect plankton from their gargantuan host.

Whales have been known to accumulate 450 kg of barnacles – a mass that seems significant but pales in comparison to the weight of the whale itself.

Whale barnacles can grow to be the size of a small orange and we’re unable to collect specimens without damaging the whale’s skin. Humans have therefore struggled to research whale barnacles; in the rare cases where live specimens are found on beached whales, researchers have only managed to keep them alive for a few short weeks.

“If you’re into barnacles, they’re pretty extraordinary”

— Michael Moore (veterinary scientist at Woods Hole Oceanographic Institution)

This doesn’t mean that scientists haven’t been creative. In one fascinating study, researchers took microscopic drill bits to the calcium shell of whale barnacle fossils in order to extract samples from a range of different depths.

Just like the rings of a tree trunk show the relative growth of the tree, the biological makeup of these samples reveals a faint but definitive backstory of whale migration. A “well-preserved whale barnacle is the perfect time-traveling tracking device”:

> Here’s why that’s cool: when in their evolutionary history baleen whales started migrating remains an open question.

> One hypothesis suggests that it happened around three million years ago, when massive ice sheets started spreading across much of the northern hemisphere. The colder temperatures would have frozen whales out of some of their habitats and put more constraints on where plankton could flourish in Earth’s oceans. And that would encourage the whales to start making longer and more directed journeys to seek out shelter and food.

> A fossilized whale barnacle is a unique window into this behavior in a whale that’s been dead for hundreds of thousands of years.

The Goose on the Cliff was keeping a beady eye on the passing dog, its neck contorted in an impossibly uncomfortable kink. We weren’t far from where I’d seen a pod of whales one sunrise late last year.

What leads you to find a place to settle, to put down cement roots and attach yourself to something, somewhere?

And what brings you to build your plates, your armor, your exterior shell, hardened and tessellated shut, in preparation for the oncoming… what?

Perhaps you’re the boat, untended and unmaintained, carting around extra weight you neither appreciate nor benefit from?

Or are you the whale, encrusted and entrusted to bear an undeniable burden, a secret pact in the ocean’s depths?

This week’s edition was inspired in part by Undrowned by Alexis Pauline Gumbs and Busy Doing Nothing by Rekka Bellum and Devine Lu Linvega. I recommend them both.

After two and a half years, source/target is taking a few months off for summer – I’ll see you again in September.

]]>

A scan of a page with a crude pen drawing of a tree. The words "I think" are clear at the top but the rest is hard to read.](https://sourcetarget.email/optim/assets/st42/darwin.webp)

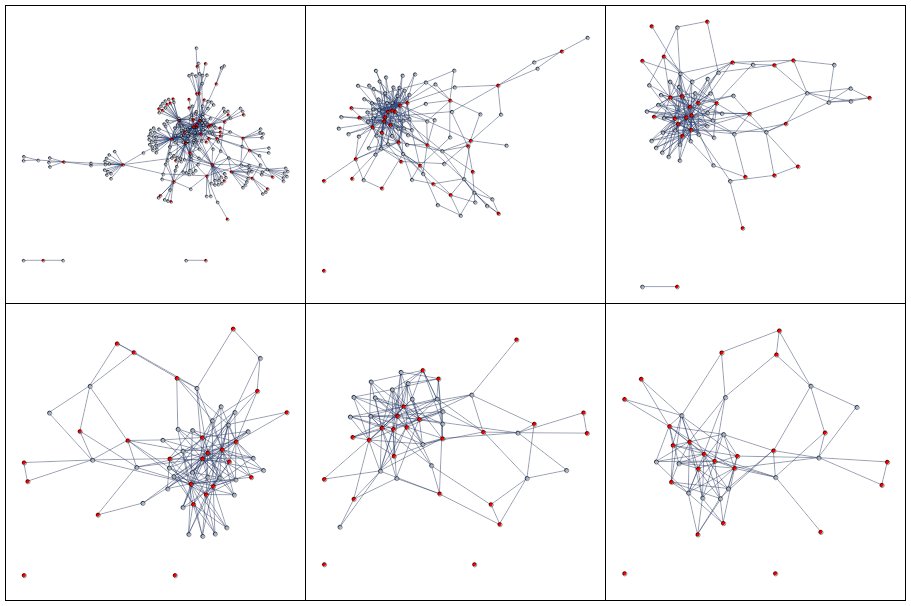

Three graphs, the original sociograms and two more modern graphs with colors.](https://sourcetarget.email/optim/assets/st41/moreno.png)

Another map, this time showing a lot of overlapping red links and dots that indicate the travel path of grey seals in the ocean.](https://sourcetarget.email/optim/assets/st39/whalenet.png)

Two ships firing water cannons at another ship that has a lot of smoke pouring out of it. The smokey ship is a container ship and has lots of containers on it's deck.](https://sourcetarget.email/optim/assets/st39/zim.jpg)

A very complex image of the evolutionary tree of a wide array of crabs.](https://sourcetarget.email/optim/assets/st39/crab-tree-of-life.jpg)

A screenshot of a Tweet that shows a flight above an ocean where the plane has traced the path of a crab](https://sourcetarget.email/optim/assets/st39/crab-track.png)

Tweet from Zack Leatherman that reads "surprising news: if you consistently do a bunch of little things over a long period of time—somehow, at the end, when you look back—it will look like you’ve done a big thing"](https://sourcetarget.email/optim/assets/st38/tweet.png)

] A screenshot from the game Age of Empires. Shows a building in the middle of a green patch of land surrounded by black nothingness.](https://sourcetarget.email/optim/assets/st38/aoe.png)

, a professor in [Computer Science](http://www.cs.utah.edu/) at the [University of Utah](http://www.utah.edu/), created [The Illustrated Guide to a Ph.D.](http://matt.might.net/articles/phd-school-in-pictures/) to explain what a Ph.D. is to new and aspiring graduate students. [Matt has licensed the guide for sharing with [special terms under the Creative Commons license](http://matt.might.net/articles/phd-school-in-pictures/#license).] Some basic drawings to illustrate the following text: Imagine a circle that contains all of human knowledge, By the time you finish elementary school, you know a little, By the time you finish high school, you know a bit more, With a bachelor's degree, you gain a specialty, A master's degree deepens that specialty, Reading research papers takes you to the edge of human knowledge, Once you're at the boundary, you focus, You push at the boundary for a few years, Until one day, the boundary gives way, And, that dent you've made is called a Ph.D., Of course, the world looks different to you now, So, don't forget the bigger picture, Keep pushing.](https://sourcetarget.email/optim/assets/st37/might.jpg)

A tweet with a picture of the Ever Given containership blocking the Suez canal with the caption "Define Betweenness Centrality. Posted in March this year, 11 retweets and 64 likes."](https://sourcetarget.email/optim/assets/st36/evergiven.png)

. - [source](https://web.archive.org/web/20170819082639/http://www.indianajones.de:80/multimedia/texte/Indiana_Jones_Wallpaper_2.php) A videogame screenshot of Harrison Ford as Indiana Jones. He's a blocky video game character facing the camera with a linen shirt, holding a whip about to strike. It says POWERD BY www.indianajones.de in the bottom left hand corner.](https://sourcetarget.email/optim/assets/st36/whip.jpg)

A 3D render of the Earth with overlayed cables showing how fibre optic cables connect countries. Earth Submarine Fiber Optic Cable Network – Network Stylized for Clarity, actual physical routes not shown. Created with rayrender. Data: github.com/telegeography/www.submarinecablemap.com. Twitter: @tylermorganwall](https://sourcetarget.email/optim/assets/st36/submarine.png)

A dense network graph with three different colours encoding the timespan each athlete started competing. The takeaway is that those who started competing after civilians were allowed in 1952 transition out of the sport quite quickly.](/optim/assets/st35/dressage.jpg)

A complete network with a subset coloured in purple representing Michael Phelps and all those he swam against directly. There are a few nodes colored silver, bronze and gold to represent medal wins. Phelps is a key player, shown by a larger node in the center of the network.](/optim/assets/st35/swimming.png)

An example topological diagram depicting a path that can be taken for rock climbing. It has a hand-drawn quality and there's a detailed legend for reference. There's lots of small labels in the diagram with hardness ratings and other context.](/optim/assets/st35/climbing.png)

Two plots depicting badminton strokes. The first has a number of circles showing the exact location strokes were made on a badminton court. The second gives a summary of the source and target positions for each of the strokes.](/optim/assets/st35/badminton.png)

Picture of a robot at the olympic games holding a basketball, ready to shoot. It's kinda scary.](/optim/assets/st35/basketball.jpg)

Photo of a cockroach. There are watermarks on the screen and it's clear that it's from Olympic TV coverage.](/optim/assets/st35/cockroach.jpg)

A photo of a little autonomous vehicle with "Tokyo 2020" on the side. It's not clear what it's doing in this photo but it's pretty cute.](/optim/assets/st35/robot.png)

] A stack of 5 books by Edward Tufte](/optim/assets/st34/tufte-books.jpg)

] Four maps with the migration patterns of different duck species](/optim/assets/st34/ducks.jpg)

] Various hive plots on a dark background](/optim/assets/st34/hiveplots.png)

A photo of a red, 8-sided road stop sign with another small sign below noting that the original sign is a stop sign.](/optim/assets/st33/stop.png)

A comic from XKCD poking fun at infographics. It suggests that long, tall informational posters will be the only form of information in the future.](/optim/assets/st33/xkcd-1273.png)

] A grid of thumbnails showing various information visualizations with a strong emphasis on network representations](/optim/assets/st32/vc.jpg)

] A white framed canvas on a wall that says "I miss my pre-internet brain" with a chair in front of it.](/optim/assets/st32/coupland.jpg)

has a good introduction A screenshot of a network graph showing nodes for each user profile.](/optim/assets/st32/clubhouse.jpg)

] A snippet of a screenshot showing various lines overlayed on a map of California. The direction of the line reflects the airport runway at that location. Many are horizontal.](/optim/assets/st32/trails.png)

] A color wheel with many emoji hearts overlaid at the position they match the wheel. There's a wide variance in values for hearts of the "same" color, especially pronounced for the green and purple hearts.](/optim/assets/st32/emoji.jpg)

] A stylized map of the town of Königsberg, now known as Kaliningrad.](/optim/assets/st31/1.png)

] An animated gif of post office locations in the USA incrementally added. You can trace the growth West.](/images/assets/st31/3.gif)

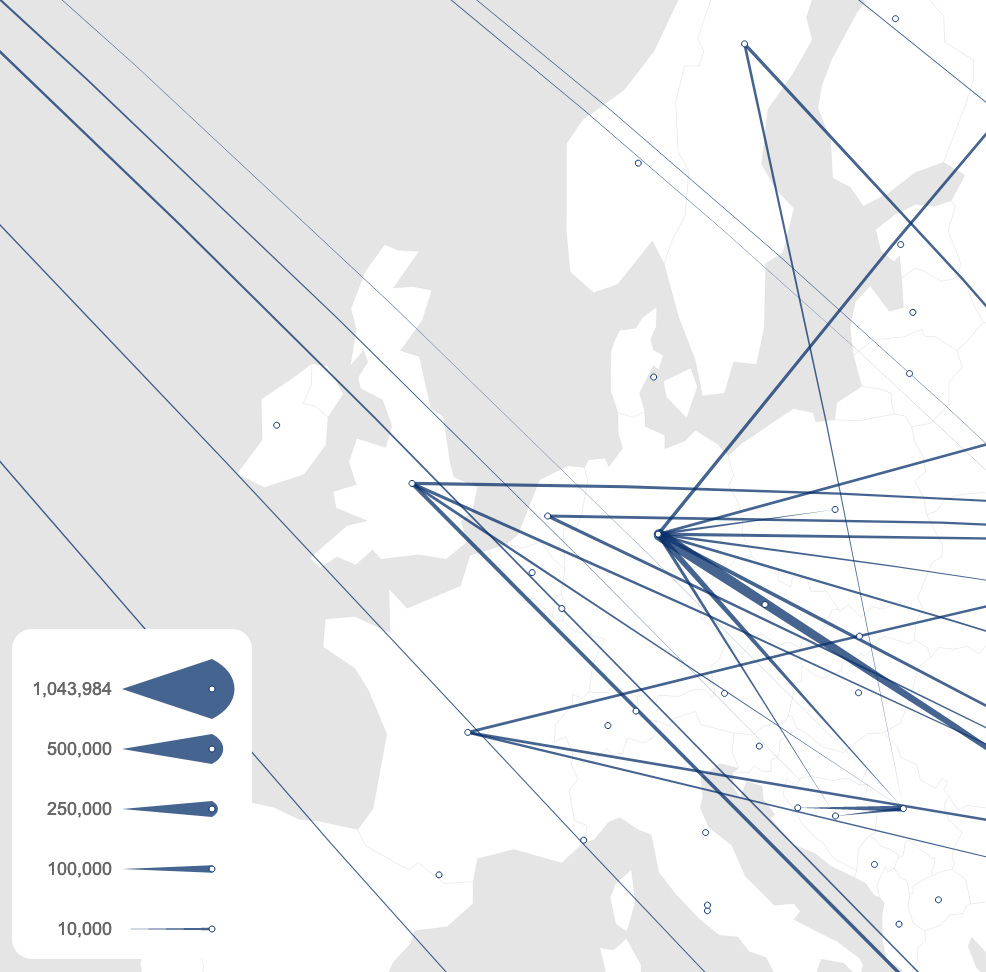

] A origin-destination map with lines representing letters being sent between various locations in the USA.](/optim/assets/st31/4.jpg)

this thing out. It's amazing. A screenshot from the Map of Reddit project showing various country-like formations with nodes representing subreddits inside](/optim/assets/st31/5.png)

] A summarized map of the city of Boston drawn from memory](/optim/assets/st31/6.jpg)

and [without](https://cjlm.dev/cryptopunks/accessories?links=1) links. A dense cluster of CryptoPunk images connected by their accessories](/optim/assets/st30/3.png)

and all the CryptoPunk transaction graphs. A close up transaction graph of CryptoPunk 6695, originally worth 0.28 ETH and now worth 9.90 ETH](/optim/assets/st30/6.png)

all the CryptoPunk transaction graphs. A more complex transaction graph for CryptoPunk #4261](/optim/assets/st30/7.png)

]](/optim/assets/st29/tulips.jpg)

](/optim/assets/st26/large/cancer.png)

](/optim/assets/st25/barter.png) ]

]

](/optim/assets/st17/3.png)

: a visual zeitgeist for the second half of 2020.](https://cjlm.ca/images/projects/hyhl.png)

, Figure 1, 1937](/optim/assets/st23/twi.png)

]

]

](/optim/assets/st21/ingredients.png)

]

]

]](/optim/assets/st19/rnartist.jpg)

](/optim/assets/st19/bandage.png)

exploring gender portrayal in film.](/optim/assets/st16/3.png)

]](/optim/assets/st15/6.png)

]](/optim/assets/st15/5.png)

...](/optim/assets/st13/1.png)

Fast.Co Design). Incidentally I just published my (non-skeuomorphic) reading list – check it out [here](https://cjlm.ca/reading-list/)](/optim/assets/st13/2.jpg)

]](/optim/assets/st13/5.png)

Visualization by

Visualization by